my bf drew a cute pic 4 my unraid server

Reminds me of when AMD tried to push “megahertz equivalent” numbers during the early 2000s clockrate specwar

It’s really history repeating itself. AMD was totally correct on principle that megahertz as such was a meaningless spec. At the same time, the spec difference symbolized the practical reality that AMD’s computers were in fact slower than Intel’s

hey, they were right the second time they did it

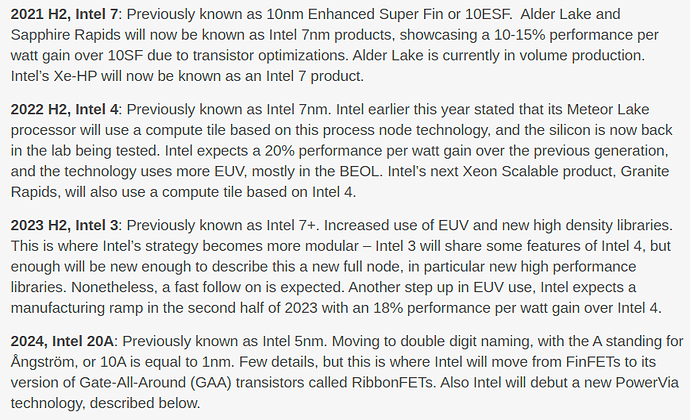

they are absolutely right that TSMC process numbers had been pretty arbitrary for a while (notably the “20nm” process of the switch and the “10nm” of the first gen iPhone X were not real steppings at all, but refinements of others), but Intel being like “we’re renaming our 5nm process to be measured in angstroms” when they still haven’t managed volume production of anything that’s competitive with TSMC 7nm (in logic density or power consumption) almost four years after it’s been shipping is pretty lol

their roadmaps aren’t credible at all and trying to juice the numbers doesn’t look great, at this point TSMC’s “3nm” is like, the reference point, and notice they aren’t trying to sell me upcoming arbitrary atom widths

This isn’t going to matter for most people, but it does for a lot, so take note that the M1 chipset currently does NOT support more than 1 external monitor natively. If you want more than one external monitor, you have to do shenanigans with laptop docks and display->USB-A adapters (yes, USB-A specifically; no, USB-C will not work) combined with the DisplayLink driver. The adapter limitation also means you’re running at 30 FPS if the monitor(s) you put on the adapter are 4K. And the overall performance you get outside of that is anyone’s guess, since this isn’t an official setup and you’re doing janky, weird workaround stuff.

I’ve gotten conflicting statements and info from different sources on whether this is a driver limitation, or an actual issue with the chipset. Apparently the M1 Mac Minis can drive more displays natively, which makes me think it’s a driver issue? But maybe there is also a fundamental difference in the chipsets/motherboards they use on both. I dunno.

what are “high performance libraries” in this case?

I believe they’re referring to… lithography middleware? the wonders never cease

That’s a bit of semiconductor jargon I’ve never heard before either.

Putting this insider word in a public roadmap might be another attempt to differentiate themselves from the competition by hinting we should care about this “library” thing nobody’s ever heard of before

The libraries are also being described as “high density”, which makes me think they’re talking about libraries of electrical primitives that their CAD tools place — i.e. all the transistors/logic gates, resistors, diodes, capacitors, etc. in the chip itself.

In other words, they seem to be phrasing what they would be doing anyway (making smaller/denser chips) in a jargony way to pretend that they have something special planned.

(granted, if they actually found a way to fabricate small, high-precision resistors on the die itself i’d be mighty impressed)

I think I might be turning audiophile, because from coincidentally listening to them both ways, I’ve started to believe that certain songs (for example, FF7R’s Under the Rotting Pizza and much of Paradise Killer’s OST) are way more satisfying to listen to with my QC35 headphones plugged in instead of running off bluetooth

I don’t know whether the main difference is the DAC or the bluetooth codec though. I also by far prefer being able to move around freely instead of feeling chained by a headphone cable, so I had gone bluetooth-everything, but now I don’t know anymore.

Does anyone happen to have opinions/advice to offer on headphones, bluetooth and DACs? Maybe it’s time for me to upgrade part of my setup. When I’ve got my headphones plugged in I’m currently using my monitor’s DAC, but maybe at a minimum I should try switching to the Apple USB-C DAC (I think it was @doolittle who linked an article about it). Not sure if there are also good USB-A DACs I could try; I never got around to adding USB-C ports to my desktop.

Bluetooth audio is just not very full in my experience – the best-case scenario is native, unresampled AAC on an apple device with airpods, or aptX resampling on another device, and both are only ok compared to a half decent cheap DAC.

the cheapest high end setup for comparison purposes would probably be one of the $100 schiit things and any comfortable headphones that aren’t backless

I see. I’m starting to sense it’s one of those things like low-DPI (and later, low-color-range) monitors where for a decade the entire industry wasn’t bothered enough by the mediocrity to actually solve the standards coordination problem, and we needed Apple’s vertical integration to give them a kick in the pants

The reason I’m using wired audio right now is in order to improve audio latency in Guilty Gear Strive. So in general I’m feeling like Bluetooth is a bad joke

I have the cheapo apple DAC that everyone loves, the hyperX DAC that came with my cheap comfy gaming headphones, and a nice old Akai amp, and I still mostly use Bluetooth audio

Yeah, I have the cheap Apple USB-C to 3.5mm dongle plugged into the now universal USB-C VR port on my GPU

I use it with Sennheiser PC37Xs (low impedance but open back w/ mic). I’ve also used MDR-7506s and HD600s but I don’t want an amp etc.

You might consider a general purpose audio interface (go see the guitar center kids thattaway) like a Scarlett 2i2, which will provide enough juice for high-impedance headphones and come in handy for a variety of other uses.

If you want to stick with the $7 Apple DAC the trick from what I’ve read is low-impedance high-sensitivity i.e. planar magnetic headphones like Hifiman. I haven’t tried these but because of the mix they should be fine without an amp whereas comparable Sennheisers would be quiet.

sup hardware thread, excel keeps hanging and freaking me out so I’m mentally shopping for a 5950x like an idiot.

felix is secretly the heir to the danish royal family

far as I can tell no software is as proficient at running as slowly as Excel, which continues to defy man and god and every CPU core it comes across

You should see the (internal Not-Invented-Here syndrome) corporate bug-tracking software our team decided to give up on and replace with a spreadsheet if you think that

People complain about Excel’s speed for the same reason they complain about web browser performance and RAM usage. The gigantic engineering investment in improving the performance of the engines just allows for more and more insanely programmed things to successfully run on top of them. (Also, to be fair, both of these types of software could’ve been architected better in the 90s for what they ended up being used for.)

I believe it! But when my tables that are no more than 10k items with a handful of unique functions can take tens of seconds to calculate over 16 threads…well, I remember my CS classes and my unit tests and I know something is up
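
For a rough sense of scale, here is a minimal Python sketch of that kind of workload: 10k rows with a handful of formula-like calculations, single-threaded. The column names, the formulas and the `recalc` helper are all made up for illustration, and this says nothing about what Excel does internally. Even in plain interpreted Python I'd expect it to finish in well under a second, which is why tens of seconds feels off.

```python
import random
import time


def recalc(rows):
    """Apply a handful of spreadsheet-style formulas to every row (hypothetical workload)."""
    # Grand total over all rows, roughly what a SUMPRODUCT would give.
    total = sum(r["qty"] * r["price"] for r in rows)
    # Dictionary lookup standing in for a VLOOKUP-style formula.
    price_by_sku = {r["sku"]: r["price"] for r in rows}

    out = []
    for r in rows:
        line_total = r["qty"] * r["price"]
        out.append({
            "line_total": line_total,
            "share": line_total / total,                     # each row's share of the total
            "looked_up": price_by_sku[r["sku"]],             # lookup by key
            "flag": "big" if line_total > 500 else "small",  # IF-style branch
        })
    return out


random.seed(0)
rows = [
    {"sku": i, "qty": random.randint(1, 20), "price": random.uniform(1, 100)}
    for i in range(10_000)
]

start = time.perf_counter()
result = recalc(rows)
elapsed = time.perf_counter() - start
print(f"{len(result)} rows recalculated in {elapsed * 1000:.1f} ms")
```

The exact timing will vary by machine; the point is only the order of magnitude, not a benchmark against Excel.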

Feel like it’s not just int-to-str conversions happening willy-nilly. Probably everything is being converted to a date, Excel’s internal number format, between each handoff